Deployment Is Coming! Anyone at the Helm?

Let’s face it, deploying applications is hard, and deploying Elixir applications is harder. Every time I have to stand up a deployment environment for my clients, my blood pressure goes through the roof and I inevitably have to reach for a healthy helping of Prozac patches. Luckily, the Elixir community has made a lot of progress on deployments, notably thanks to the great efforts of Paul Schoenfelder, but the process is still somewhat painful…

I’ll admit, I am biased and think Kubernetes and Helm are the duck’s nuts when it comes to deploying applications and feel they solve several provisioning/productivity pain points. In this post, I will share with you my current Elixir deployment strategies and recipes.

This is by no means the be-all and end-all guide when it comes to Elixir deployments. My hope is that you’ll find some interesting nuggets below to spice up your own deployment recipes.

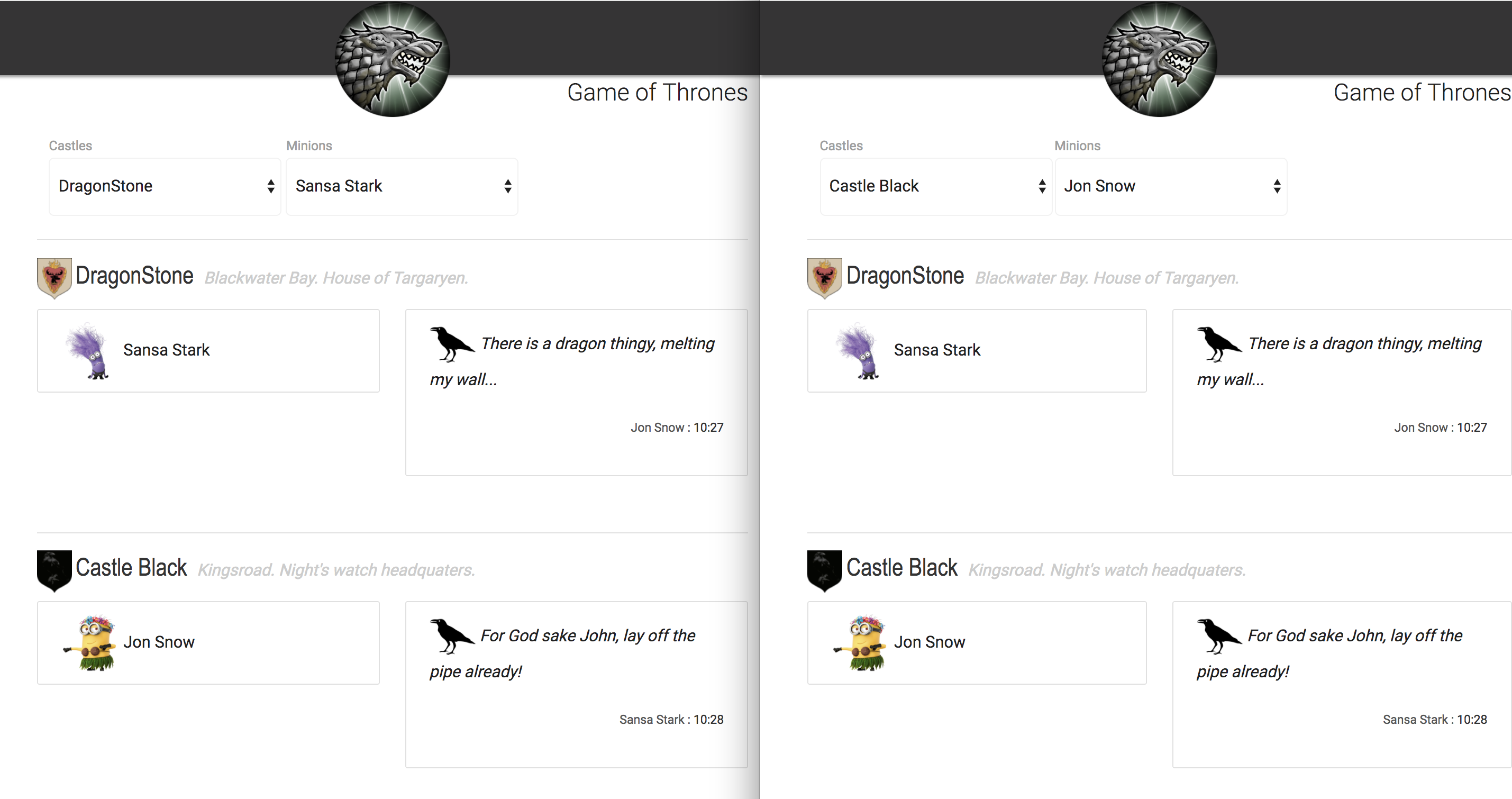

In this installment, we will deploy my now world-famous Game of Thrones app. This is an Angular frontend, which interacts with a Postgres database using Phoenix. The application is composed of Castles and their affiliated Minions. Inter-Castle communication is achieved by dispatching Ravens, leveraging the awesomeness that is Phoenix Presence and Channels (Thanks Chris!!).

When I look at orchestration frameworks, one key thing for me is the ability to run things locally on my machine the same way they’ll run in production. In my mind, this is important for tuning your deployment recipes and dependencies and for sharing them with teammates, and it also prevents, to a large degree, the typical “Holy shit!” moment when pushing to production. Honing your deployment recipes along with your code from the get-go is, in my mind, the true essence of DevOps: everyone on the team runs mini versions of the actual deployment on a day-to-day basis, which eliminates surprises, whether come D-Day or when pushing to prod several times per day.

I imagine by now, you’re psyched out of your mind to deploy the killer app! The following demonstrates a steel thread for a typical Phoenix deployment.

Fast Track…Is Yara at the Helm?

Standing up the Game of Thrones application merely involves the three following commands:

# Provision the GoT Postgres DB

helm install --name got -f deploys/helm/pg.yml stable/postgresql

# Provision the GoT service

helm install --name got-svc -f deploys/helm/got-svc.yml imhotep/got-svc

# Provision the GoT UI

helm install --name got-ui -f deploys/helm/got-ui.yml imhotep/got-ui

The cool thing here is that these commands apply not only to your dev env but also to wherever your cloud destination might be, namely AWS, Azure, DigitalOcean, Google Cloud, PappysSuperDuperCloud…

Hopefully I have just whetted your deployment appetites, so let’s highlight what’s involved here to make this a reality.

If my prose annoys you, you can directly dial in Game of Thrones on the hub.

The Gory Details…

Now, before you hate me, there is a bit of setup and a few installs necessary to achieve the above deployment commands. Good news for OSX users: most of it is now a Brew away, and you only have to perform most of these steps once, so bear with me…

- Hypervisor

In order for Kubernetes to run on your local machine, you need to install a VM tech such as VirtualBox, VMware Fusion, Xhyve… I’ve found that Xhyve is lighter weight and makes things simpler.

Make sure to follow all install instructions!

- Kubectl

Kubernetes leverages a CLI called kubectl that allows you to interact with your cluster.

# Install Kubectl

brew install kubectl

- MiniKube

To run a Kubernetes installation on your local box, you will need to install Minikube. This will run a single node Kubernetes cluster on your machine.

# Install Minikube

brew install minikube

# Starts minikube with 4 cores, 2GB of RAM using the Xhyve hypervisor

minikube start --cpus 4 --memory 2048 --vm-driver xhyve

- Verify!

Check to make sure everything is running as it should!

# Check for Kubernetes nodes - In minikube you will only have one! Make sure node is in ready state.

kubectl get nodes

- Helm

Helm is the Kubernetes package manager. For folks familiar with Chef and Puppet, Helm provides a set of vetted Kubernetes recipes to deploy various pieces of tech. In the Helm world, these are called charts. We will use the Postgres chart to provision our database.

# Install helm

brew install kubernetes-helm

# Initializes helm and installs Tiller to manage charts deployments

helm init

# Search charts repository for postgres

helm search postgres

NAME VERSION DESCRIPTION

stable/postgresql 0.8.1 Object-relational database management system (O...

Now we will provision the Postgres database using a sample yaml configuration from our github repo.

In our Postgres chart configuration we override a few settings as follows:

# Setup Db credentials...
postgresUser: fernand
postgresPassword: Blee!
# Persist volume size
persistence:
  size: 20M
# Resource consumption
resources:
  requests:
    memory: 256Mi
    cpu: 100m
# Service type
service:
  type: NodePort

# Provision the database

helm install --name got -f deploys/helm/pg.yml stable/postgresql

# Verify by checking the running pods. After a little while you should see a got-postgresql-xxxx pod in a running state.

kubectl get pods

There are a couple of things to note here. For simplicity’s sake, I’ve made the database externally accessible so you can interact with it locally. In a prod situation, this should not be the case! The sample pg configuration file also exhibits db credentials in plain text. These creds should be hidden too. There are multiple options here, such as storing secrets in an encrypted git repo or using the excellent HashiCorp Vault.
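As a small first step before reaching for Vault, the password can at least be kept out of the checked-in values file by passing it at install time with Helm's `--set` flag. The sketch below is only an illustration: the `install_pg` function name and the `PG_PASSWORD` environment variable are assumptions, not something the repo defines.

```shell
# Hypothetical helper: install the Postgres chart without a password in pg.yml.
# PG_PASSWORD is an assumed environment variable, exported by the caller.
install_pg() {
  # Abort with a message if the password was not exported beforehand
  : "${PG_PASSWORD:?export PG_PASSWORD before installing}"

  # --set overrides the postgresPassword value coming from the -f file
  helm install --name got \
    -f deploys/helm/pg.yml \
    --set "postgresPassword=${PG_PASSWORD}" \
    stable/postgresql
}
```

This only keeps the secret out of git. Helm still records the value with the release, and passwords containing commas need escaping for `--set`, so treat it as a stopgap rather than a real secret store.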

For Christ’s Sake, Release Them Ravens Already!

If you’ve glanced at the repo, you may have noticed a bit of a departure from the traditional Phoenix application setup. Our distribution is an umbrella app. The Game of Thrones application has two concerns: 1) interacting with a persistence layer and 2) serving the application over HTTP/WS. Thus we’ve composed two Elixir applications, namely store and svc. The store is concerned with storing/retrieving records from our persistence layer, while the svc handles HTTP requests and web-socket communication. If later on we decide to provide gRPC (the next Jedi!) as a communication interface, we can still reuse our store app across the multiple communication layers. I find this approach easier to conceptualize and manage, especially for larger projects.

We will be using Distillery coolness to build our release, so we’ve added it to our dependencies. There is currently a bit of a trick needed to get environment variables injected into the release’s configuration. We use REPLACE_OS_VARS and sys.config: the release is built with placeholders that are replaced by environment variables when it boots. I think there are better ways to do this if you don’t want to drop down to Erlang, but I haven’t explored them as of yet…

To build our release, we use Alpine-Elixir from Derailed (Who is that clown?). Now, in order to build an Erlang release, one must compile the application code on the same machine/distro the application runs on. This is typically a two-step process: 1) generate a release tar file and stash it, then 2) use the release tar to generate a runnable Docker image. We are in luck here and can use the new Docker multi-stage build feature to build our Game of Thrones image in one fell swoop, as shown below.

# Compile Release Stage

FROM derailed/alpine-elixir:1.5.0

MAINTAINER Fernand Galiana

ENV MIX_ENV=prod

ARG APP=got

ARG VERSION=0.0.1

RUN mix do local.hex --force, local.rebar --force

WORKDIR /app

COPY . .

RUN mix do deps.get, deps.compile && \
    mix release --env=prod && \
    mkdir release && \
    cd release && \
    tar -xzf /app/_build/prod/rel/$APP/releases/$VERSION/$APP.tar.gz

# Runtime Stage

FROM alpine:3.5

ENV MIX_ENV=prod \
    PORT=4000 \
    REPLACE_OS_VARS=true

ARG APP=got

ARG VERSION=0.0.1

WORKDIR /app/bin

COPY scripts/run.sh /app/bin

COPY --from=0 /app/release /app

RUN apk add --no-cache --update bash openssl ca-certificates

ENTRYPOINT ["/app/bin/run.sh"]

The resulting Docker image to run our Elixir application is a smashing 37MB!

REPOSITORY TAG IMAGE ID SIZE

quay.io/derailed/got 0.0.2 f238f4e4f1b5 37MB

Feeling a bit mixed up? You should be. A side effect of deploying a release version of your app is that you no longer have access to your beloved crutch, aka Mix ;-(

So in order to run some of our usual tasks such as migrations, seeds or post deploys, you must now switch to running commands from your build artifact. We are in luck here, as the release binary takes ad-hoc commands that one can run on a bundled release. Once the release is in its destination container, we can ensure the db exists and the latest migrations and seeds are applied by issuing the following command:

got command Elixir.Svc.Commands.Db update_db
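To make that concrete, here is a minimal sketch of what a container entrypoint in the spirit of scripts/run.sh could look like. It is not the script from the repo: the `RELEASE_BIN` variable and both function names are assumptions for illustration, and it relies on the `command` and `foreground` tasks of the Distillery boot script.

```shell
# Hypothetical sketch of an entrypoint in the spirit of scripts/run.sh.
# RELEASE_BIN is an assumed variable; /app/bin/got is where the Dockerfile
# above ends up placing the release's boot script.
RELEASE_BIN="${RELEASE_BIN:-/app/bin/got}"

# Ensure the db exists, then apply the latest migrations and seeds
prepare_db() {
  "$RELEASE_BIN" command Elixir.Svc.Commands.Db update_db
}

# Replace the shell with the release so the VM receives container signals
start_app() {
  exec "$RELEASE_BIN" foreground
}
```

An entrypoint built this way would simply end with `prepare_db && start_app`, so a failed migration keeps the app from starting.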

Next we will use our new docker image and provision our application using Helm.

Helm is a templating engine that allows you to parameterize your Kubernetes manifests and inject values using Go templates. This is a bit verbose to explain here, so please refer to the repo to look at how the GoT charts are composed.

Next, we need a repo to host our charts so that our team can install them. Quay.io provides a new feature that lets you push a Helm chart and serve it up, making it available for your team to install. At the time of this writing, there was a bit of a disturbance in the Quay force, hence we had to resort to our own helm chart registry. It is simply a GitHub Pages powered site with the chart tarballs and an index, nothing special.

# Tell helm to add a new repo to fetch charts from

helm repo add imhotep http://imhotep.io/helm-charts

# Fetch the latest and greatest

helm repo update
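For the publishing side of such a GitHub Pages chart repo, the sketch below shows one way the tarball and index could be produced with `helm package` and `helm repo index`. The `publish_chart` function and the directory arguments are assumptions for illustration; the actual layout of the imhotep repo may differ.

```shell
# Hypothetical publishing helper; both directory arguments are assumptions.
#   $1: chart source directory
#   $2: local checkout of the GitHub Pages site serving the charts
publish_chart() {
  chart_dir="$1"
  repo_dir="$2"

  # Package the chart into a versioned tarball inside the pages checkout
  helm package "$chart_dir" -d "$repo_dir" || return 1

  # Regenerate index.yaml so clients running `helm repo update` see the new tarball
  helm repo index "$repo_dir" --url http://imhotep.io/helm-charts
}
```

After something like `publish_chart charts/got-svc docs`, committing and pushing the pages checkout is all that is left.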

Ready to deploy your apps? Yes please!

# Now install our new GoT Service chart

helm install --name got-svc -f deploys/helm/got-svc.yml imhotep/got-svc

# Next install our new GoT UI chart

helm install --name got-ui -f deploys/helm/got-ui.yml imhotep/got-ui

And finally…

# Access the Game of Thrones service URL

curl -XGET `minikube service got-svc --url`/api/castles

# Launch the GoT UI in your favorite browser!

minikube service got-ui

Given my advanced age and that I can’t remember what I was doing 5 mins ago, I repurpose the wonderful Make utility to synthesize commands and produce the requested artifacts. I find it a nice convenience vs using straight bash or mix tasks. As such, when the code changes and I am ready to push out a new release, I can simply issue the following command:

# Generate a new release and update the GoT service using rolling updates

make svc-push svc-update
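For readers who prefer to see what such targets might wrap, here is a rough shell equivalent. It is a sketch under assumptions, not the Makefile from the repo: the function names mirror the targets, and the `image.tag` value key is a guess about the chart, so check the chart's values before relying on it.

```shell
# Hypothetical equivalents of the svc-push and svc-update targets.
# IMAGE comes from the image listing above; image.tag is an assumed chart value.
IMAGE="quay.io/derailed/got"

# Build the release image and push it to the registry
svc_push() {
  version="$1"
  docker build -t "$IMAGE:$version" . || return 1
  docker push "$IMAGE:$version"
}

# Point the running release at the new image; Kubernetes rolls the pods over
svc_update() {
  version="$1"
  helm repo update || return 1
  helm upgrade got-svc imhotep/got-svc \
    -f deploys/helm/got-svc.yml \
    --set "image.tag=$version"
}
```

If a release misbehaves, `helm history got-svc` lists the revisions and `helm rollback got-svc <revision>` goes back to a known good one.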

Sheesh! Are We Done Yet?

If you’re still reading this you may think to yourself “Bof! The French man needs to lay off the pipe!”. There is a lot here to take in and digest, as well as multiple tools to master. I certainly understand the feeling! The blessing is that most of the CLIs are actually simple to learn. Deployments are complex, and if you are building something useful, it is unlikely to involve just one piece of tech. I also know that the times of building apps and tossing them over the fence for Ops to deploy and support are over and done.

As developers, it is our responsibility to own the bulk of deployments and support them. I think that Kubernetes gives you a uniform means to manage your deployments locally and in the cloud. What’s more, your application alone is not enough; most likely you will want to incorporate third-party tech for searching, monitoring, alerting, tracing, etc. Most of it is unlikely to be Elixir GenServers…

Once you’ve got your base K8s chops in order, you can easily venture into integrating with other stacks and leverage best-of-breed techs using the same familiar mechanisms. There is a lot of talk about giving up hot code reloads when going to the Docker side. It’s true. Though a nice Erlang convenience, I think there is much more to it than simply injecting new features at runtime, as these may affect other parts of your deployment stack and not just your Elixir code. In the end, I find that cutting a new release and leveraging Kubernetes rolling updates across your entire deployment, while allowing for quick rollbacks, is IMHO a much cleaner, more predictable and more trackable solution.