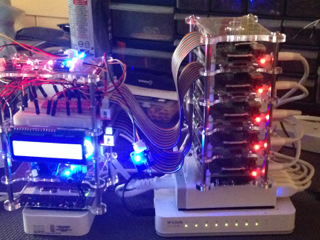

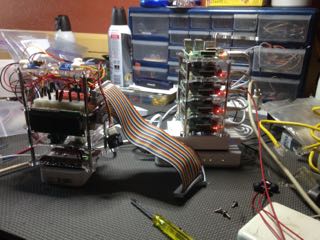

PiKube anyone? Yes please!!

IoT means different things to different folks. For me, I’ve always loved doing home automation projects. As I see it, most IoT projects are set up to get you going quickly, either by burning a canned SD card image or by permanently shackling your computer to your microcontroller. To that I say: Lameness! And I am unanimous on that!

To me, doing home automation means that you’re going to have a bunch of devices spread out throughout your humble abode. That in itself is great, but having each device operate in isolation is just plain Jurassic! By that I mean: meshing devices and having them talk and interact with each other is, in my mind, the Holy Grail!

There are some who call me… PiKube!

In the last year or so, I have been using Kubernetes to orchestrate different application clusters. As of release 1.4, the hard-working Kubernetes crew added the kubeadm CLI, which makes spinning up masters and minions a breeze. This rekindled my interest in running K8s on my Raspberry Pi cluster. Thanks to the excellent work of Lucas Käldström, who ported Kubernetes to ARM, spinning up K8s on my cluster was now a reality. My efforts were also catalysed by an excellent presentation by Ju Liu at ElixirLDN earlier this year.

Though this in itself is pretty rad, I wanted to push the envelope a bit further…

Can I run dockerized versions of my IoT bots, orchestrated by K8s?

The answer: You Bet!

So why would anyone in her or his right mind want to do that? As with software, hardware failures are not a matter of if, but when. An orchestration framework that continually monitors minions and ensures preconditions are maintained is ideal in this environment. To boot, service discovery, node selection and custom schedulers give you great flexibility in managing an IoT-driven cluster.

I will soon post the steps to spin up a K8s Pi cluster, but in the meantime, indulge me while I whet your appetite…

I’d like to jive with you a bit on how to dockerize your IoT app. For this example, we will be using dockerized Elixir bots. Some will rightfully argue that running a VM (the Erlang BEAM) on already resource-limited hardware is less than ideal. However, I’ve found that leveraging Phoenix channels/presence (thanks, Chris!) to have bots discover new hardware and communicate with each other, and even with UI frontend components, is indeed the duck’s nuts!!

Dockerizing a Bot…

There are many bridges to cross here that will most certainly push your Prozac patches bill to a new record high. Furthermore, finally getting stuff working and being jolted late at night by a blinking LED or a buzzing speaker could surely send you on to your untimely death. Remember, I did say Holy Grail, not Early Grave! So tread lightly…

Docker on ARM is a beast of its own. As it stands, you can’t simply build the image on your Mac, toss it over to the Pi and call it done: an image built on an x86 Mac contains x86 binaries, which won’t run on the Pi’s ARM CPU. I understand QEMU can help here, but I figured: why not just install and run Elixir directly on the Pis, then build a release and dockerize from there?

The install took some time on my RPi3, so I took off, had a couple of kids and came back to a nice $ prompt. And boy, am I glad I did: it is just cool to fire off iex on the Pi and even run Phoenix directly from the command line. Moreover, once I had built the necessary pieces (i.e. Erlang/Elixir) on one of my Pis, getting this env running on the other Pis was just an rsync away. I am using HypriotOS, which gives you a Debian distro and Docker right off the bat. Erlang is packaged for that distro, but as of this writing, release 17 was all one could find. I needed 18 or better to run the latest Elixir/Phoenix.

Configuring the application for the bot was simple. I’ve built a little Elixir ExBot library (coming to you soon!) to configure a robot, like so:

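```elixir
# config/config.exs
use Mix.Config

config :ex_bot,
  # One I2C chip on bus 1 (/dev/i2c-1) at address 0x48, with three sensors
  i2c: [
    channel: 1,
    address: 0x48,
    devices: [
      [channel: 0, name: :photo],
      [channel: 1, name: :temp],
      [channel: 2, name: :mic]
    ]
  ],
  # GPIO pins (BCM numbering) and their directions
  gpios: [
    [pin: 17, name: :buzz, direction: :output],
    [pin: 23, name: :laser, direction: :output],
    [pin: 5, name: :merc, direction: :input]
  ]
```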
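ExBot isn’t published yet, so here is only a hypothetical sketch (in Python, purely for illustration) of what a library like it might do with this config: index each named device by where it lives on the hardware, and reject duplicate names or reused GPIO pins. The dict layout mirrors the Elixir keyword lists above; `build_registry` is an invented name, not ExBot’s API.

```python
from typing import Any, Dict, Tuple

# Hypothetical Python mirror of the :ex_bot config above.
CONFIG: Dict[str, Any] = {
    "i2c": {
        "channel": 1,      # I2C bus number -> /dev/i2c-1
        "address": 0x48,   # 7-bit address of the I2C chip
        "devices": [
            {"channel": 0, "name": "photo"},
            {"channel": 1, "name": "temp"},
            {"channel": 2, "name": "mic"},
        ],
    },
    "gpios": [
        {"pin": 17, "name": "buzz", "direction": "output"},
        {"pin": 23, "name": "laser", "direction": "output"},
        {"pin": 5, "name": "merc", "direction": "input"},
    ],
}


def build_registry(config: Dict[str, Any]) -> Dict[str, Tuple]:
    """Map each device name to its hardware location.

    I2C devices -> (bus_device_path, chip_address, chip_channel)
    GPIO devices -> ("gpio", pin, direction)
    Raises ValueError on duplicate names or reused GPIO pins.
    """
    registry: Dict[str, Tuple] = {}
    i2c = config["i2c"]
    bus_path = f"/dev/i2c-{i2c['channel']}"
    for dev in i2c["devices"]:
        if dev["name"] in registry:
            raise ValueError(f"duplicate device name: {dev['name']}")
        registry[dev["name"]] = (bus_path, i2c["address"], dev["channel"])

    used_pins = set()
    for gpio in config["gpios"]:
        if gpio["name"] in registry:
            raise ValueError(f"duplicate device name: {gpio['name']}")
        if gpio["pin"] in used_pins:
            raise ValueError(f"GPIO pin {gpio['pin']} used twice")
        used_pins.add(gpio["pin"])
        registry[gpio["name"]] = ("gpio", gpio["pin"], gpio["direction"])
    return registry


if __name__ == "__main__":
    for name, location in build_registry(CONFIG).items():
        print(name, location)
```

Catching a doubled-up pin at startup is cheaper than debugging a laser that fires when the buzzer should.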

The Dockerfile for one of my bots looks like this.

```dockerfile
# Alpine-based Elixir 1.3.4 image built for ARM
FROM derailed/alpine-arm-elixir:1.3.4

ENV APP_DIR /app
ENV HTTP_PORT 4000
ENV MIX_ENV prod
# Where native (C) deps look for Erlang's ei headers and libraries
ENV ERL_EI_INCLUDE_DIR /usr/lib/erlang/usr/include
ENV ERL_EI_LIBDIR /usr/lib/erlang/usr/lib

WORKDIR $APP_DIR
COPY ./ $APP_DIR

# Install build tools, fetch and compile deps + app, then drop the build tools
RUN set -ex; \
    mkdir /root/.ssh; \
    apk --no-cache add --virtual .build.deps git make gcc erlang-dev libc-dev linux-headers; \
    mix local.hex --force; \
    mix local.rebar --force; \
    mix deps.get; \
    mix deps.compile; \
    mix compile; \
    apk del .build.deps

EXPOSE $HTTP_PORT

CMD ["mix", "phoenix.server"]
```

This produces a ~70MB image. I think it could be trimmed down further by shipping only the compiled Erlang BEAM files, but figured it was good enough for now!

The next question that came to me was:

How does one interact with the host’s devices and system dirs from inside a container?

This one threw me for a bit of a loop. Docker does offer a `--privileged` flag for that purpose, which gives the container access to the host’s devices.

Firing off my bot:

```shell
sudo docker run -it --privileged sensors-bot
```

It worked! Splendid: I am now able to access the pins on the Pi and interact with my devices. So then I thought, cool deal, Kubernetize that shit and we’ll be happy as a hippo!

Boy, was I wrong. Though K8s does offer the ability to turn on privileged mode for a pod’s containers, it turned out to be a complete dud. A quick `docker inspect` revealed unsettling diffs between the container started from the command line and the one started by the K8s pod. Me think `devices: null` vs `devices: []` was the culprit here??
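To hunt down diffs like this one, a small Python sketch can load two `docker inspect` outputs and report which `HostConfig` keys differ. It only assumes the standard inspect JSON shape (an array with one object per container, each holding a `HostConfig` map); the script and file names in the usage line are hypothetical.

```python
import json
import sys
from typing import Any, Dict, Tuple


def host_config(inspect_json: str) -> Dict[str, Any]:
    """HostConfig of the first container in `docker inspect` output."""
    containers = json.loads(inspect_json)  # docker inspect prints a JSON array
    return containers[0]["HostConfig"]


def diff_host_config(a_json: str, b_json: str) -> Dict[str, Tuple[Any, Any]]:
    """HostConfig keys whose values differ between two containers.

    A key missing on one side reads as None there. JSON null (None)
    and an empty list ([]) compare unequal, so that case is reported.
    """
    a, b = host_config(a_json), host_config(b_json)
    return {
        key: (a.get(key), b.get(key))
        for key in sorted(set(a) | set(b))
        if a.get(key) != b.get(key)
    }


if __name__ == "__main__":
    # Usage: python diff_inspect.py cli.json pod.json
    with open(sys.argv[1]) as f1, open(sys.argv[2]) as f2:
        for key, (left, right) in diff_host_config(f1.read(), f2.read()).items():
            print(f"{key}: {left!r} vs {right!r}")
```

Save `docker inspect sensors-bot` to one file and the inspect output of the pod’s container (its ID is listed by `docker ps`) to the other, then compare.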

So then I thought:

No deal. What if I just mount the device and sysfs dirs directly into the pod as volumes?

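It worked! Note to self: don’t forget the symlinks! The gpioN entries under /sys/class/gpio are symlinks into /sys/devices, which is why the manifest below mounts /sys/devices as well.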
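A quick sanity check for the symlink gotcha, sketched in Python: list the symlinks in a directory whose targets don’t resolve. Pointing it at /sys/class/gpio from inside the pod is one way to confirm the gpioN links actually land somewhere. This is a hedged diagnostic sketch, not part of the bot.

```python
import os
from typing import List


def dangling_links(directory: str) -> List[str]:
    """Names of symlinks in `directory` whose targets don't resolve.

    os.path.islink() is True for the link itself, while os.path.exists()
    follows the link and returns False when the target is missing.
    """
    broken = []
    for name in sorted(os.listdir(directory)):
        path = os.path.join(directory, name)
        if os.path.islink(path) and not os.path.exists(path):
            broken.append(name)
    return broken


if __name__ == "__main__":
    bad = dangling_links("/sys/class/gpio")
    print("dangling:", bad or "none")
```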

A K8s sample manifest…

```yaml
kind: Deployment
apiVersion: extensions/v1beta1
metadata:
  name: sensors
  labels:
    app: sensors
spec:
  replicas: 1
  template:
    metadata:
      labels:
        app: sensors
    spec:
      containers:
      - name: sensors
        securityContext:
          privileged: true # => Run the container in privileged mode
        image: sensors:0.0.3
        ports:
        - containerPort: 4000
          name: api
        volumeMounts:
        - mountPath: /dev/i2c-1
          name: dev-i2c-1
        - mountPath: /sys/class/gpio
          name: sys-class-gpio
        - mountPath: /sys/devices
          name: sys-devices
      volumes: # => Map i2c pins and gpio pins
      - name: dev-i2c-1
        hostPath:
          path: /dev/i2c-1
      - name: sys-class-gpio
        hostPath:
          path: /sys/class/gpio
      - name: sys-devices
        hostPath:
          path: /sys/devices
```
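What those mounts buy you: the legacy sysfs GPIO interface is just files, so a process that can see /sys/class/gpio can drive a pin by exporting it, setting its `direction` and reading or writing its `value`. Here is a minimal Python sketch of that flow; the `SysfsGpio` class is hypothetical, and whether ExBot does exactly this in Elixir is an assumption.

```python
import os


class SysfsGpio:
    """Minimal pin access through the legacy sysfs GPIO interface.

    `root` defaults to /sys/class/gpio (the path mounted into the pod);
    it is a parameter only so the class can be exercised on a fake tree.
    """

    def __init__(self, pin: int, direction: str, root: str = "/sys/class/gpio"):
        if direction not in ("in", "out"):
            raise ValueError("direction must be 'in' or 'out'")
        self.pin = pin
        self.path = os.path.join(root, f"gpio{pin}")
        if not os.path.exists(self.path):
            # Ask the kernel to create /sys/class/gpio/gpio<pin>
            self._write(os.path.join(root, "export"), str(pin))
        self._write(os.path.join(self.path, "direction"), direction)

    @staticmethod
    def _write(path: str, text: str) -> None:
        with open(path, "w") as f:
            f.write(text)

    def write(self, high: bool) -> None:
        """Drive an output pin high (1) or low (0)."""
        self._write(os.path.join(self.path, "value"), "1" if high else "0")

    def read(self) -> bool:
        """True if the pin currently reads high."""
        with open(os.path.join(self.path, "value")) as f:
            return f.read().strip() == "1"
```

With the pins from the config above, `SysfsGpio(17, "out").write(True)` would sound the buzzer and `SysfsGpio(5, "in").read()` would poll the mercury switch. Checking for an existing `gpio<pin>` dir before exporting avoids re-exporting a pin that is already exported.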

And that’s a wrap!

So there you have it: we now have a build that allows applications to interact with the host hardware! To boot, these applications are monitored and communicate with each other using DNS on the cluster. From here, the sky is the limit: you can now deploy your IoT contraptions and have K8s orchestrate your cluster, running your pods on the right boards by leveraging labels and annotations. Moreover, you get all the K8s goodies: service discovery, rolling updates, monitoring, etc…
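Steering a bot onto the board that actually has the hardware is what node labels are for. You label the node (e.g. `kubectl label nodes <node-name> sensors=true`, where `sensors=true` is a made-up label) and add a matching `nodeSelector` under the pod template’s `spec`. The Python sketch below only models the rule nodeSelector applies, so the scheduling outcome is easy to reason about: every selector key must be present on the node with exactly the same value, and extra node labels are ignored. Node names and labels are hypothetical.

```python
from typing import Dict, List


def fits(node_labels: Dict[str, str], node_selector: Dict[str, str]) -> bool:
    """nodeSelector rule: every key/value pair must match the node's labels."""
    return all(node_labels.get(k) == v for k, v in node_selector.items())


def candidate_nodes(nodes: Dict[str, Dict[str, str]],
                    node_selector: Dict[str, str]) -> List[str]:
    """Names of nodes a pod with this nodeSelector may be scheduled on."""
    return sorted(name for name, labels in nodes.items()
                  if fits(labels, node_selector))


if __name__ == "__main__":
    # Hypothetical cluster: only pi-1 has the sensor hat attached
    nodes = {
        "pi-1": {"board": "rpi3", "sensors": "true"},
        "pi-2": {"board": "rpi3"},
    }
    print(candidate_nodes(nodes, {"sensors": "true"}))
```

An empty selector matches every node, which is why a pod without one can land on any board.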

NY Times says: This shit is the shit!

If I’ve prosed this piece right, I believe I know what some of you will find in your Xmas stockings this year ;)

Happy Holidays to you and yours!!

– Bad Santa