Trimming The Sails With Istio

Plotting The Chart…

Kubernetes ensures the contract one establishes between the application and the infrastructure is honored at all times. However, having dabbled on this scene for a while, and in particular in the renovation space when it comes to monoliths, I feel we need a better handle on the management problem we’ve introduced. The single-moving-part app is being replaced by many moving parts that must collaborate in unison to provide customer solutions. In this space, workflow, observability and fine-grained controls are musts, and devs need better tooling to herd microservices in the wild.

Moreover, you may not have developed some of the components your application relies on. It seems we are entering a commodity era in CS. Things like machine learning, voice recognition, GIS, … are being commoditized to provide enhanced application capabilities and alleviate development costs. Though newer in the CS space, this is not a new concept. Does your mechanic rebuild your carburetor or just replace it with a new one?

Lately, I’ve been thinking differently about how I write apps. I’ve started thinking of applications as decorated business logic. The meat of your application is the business logic, not how it’s served. So why do we routinely start out by coding the serving layer front and center? HTTP or gRPC should be cross-cuts of the application, not starting points. This paradigm applies from the call stack level all the way up to the service layer. For the old-timers out there, this should sound familiar, as it is the core premise of Aspect-Oriented Programming. Whether one wants to log, measure or trace calls, these should be orthogonal concerns with minimal mucking (read: none!) of the business logic code.

Istio took this very premise and made it actionable at the service mesh level! How cool is that?

Sail Into The New Year!

According to the marketeer verbiage, Istio is a platform to connect, manage and secure microservices. Yes, I know, this hardly whetted my dev appetite either ;(

Istio is the output of a collaboration between Google, IBM and Lyft. Just like aspects allowed us to inject cross-cuts into the call stack, Istio can inject concerns into your services’ communication channels at runtime. This not only lets you gather metrics and tracing info at the service level, but also lets you change traffic patterns within the service mesh dynamically. To me, this is totally mind-blowing!!

Hence, if you look at your application as a collection of collaborating services, Istio can be seen as the Maestro that keeps the beat on how service ensembles communicate to form an application. The heavy lyfting is handled by Envoy from Lyft (million+ req/s in prod for the last 2 years!). Envoy is the core tech in Istio: a configurable proxy that intercepts network traffic going in/out of the cluster as a whole, and similarly at the service level. Of interest is that, by leveraging Custom Resource Definitions (CRDs), Envoy has been integrated into the Kubernetes ecosystem and thus feels like a native component.

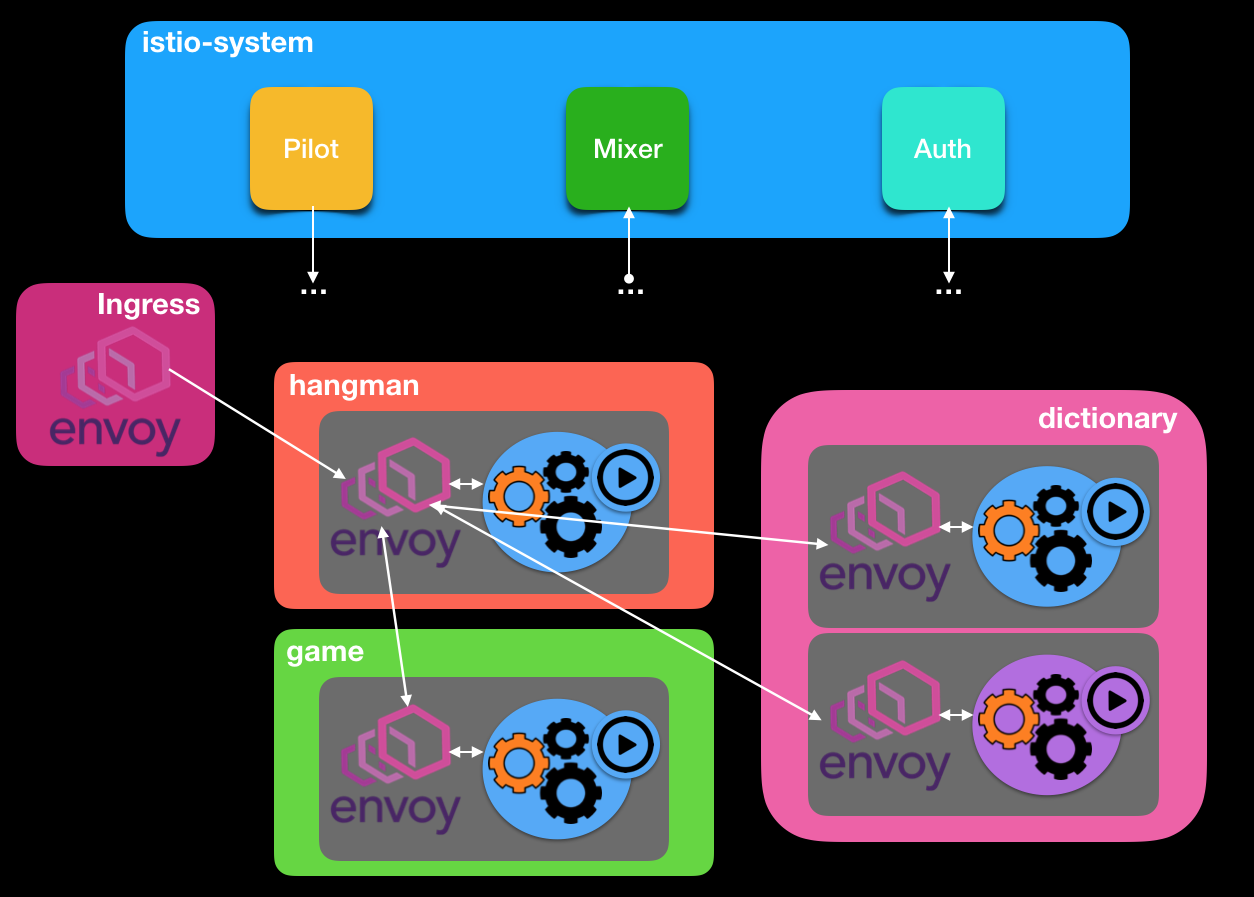

The gist of this integration is that an Envoy container is launched upon pod creation and lives alongside your service container in the same pod (the Sidecar pattern). Envoy is in charge of controlling traffic coming in/out of your service and hence can monitor and side-effect its behavior. This does come with a cost: besides having to run a co-located container, you also need to run additional control-plane services to create an Istio-aware cluster. In a nutshell, the extra services are composed of Auth, Mixer and Pilot.

- Auth - provides service identity and TLS exchanges to verify authn and authz using service accounts

- Pilot - manages service mesh traffic policies across Envoys

- Mixer - manages metrics and tracing collection

Additionally you can install Prometheus, Zipkin, ServiceGraph and Grafana and you’ve enabled all sorts of cool metrics/dashboards to give yourself superpowers!

By leveraging Kubernetes initializers, extra behavior can be injected into a pod manifest. So you can keep authoring your deployment manifests the usual way, and they will be made Istio-aware during creation using the familiar kubectl CLI. Your pod is decorated with 2 additional containers: an Envoy that manages calls going in/out of your container, and an ephemeral init container that sets up iptables rules to reroute traffic through the pod’s Envoy. IMHO that magic is the duck’s nuts!

For those wanting to play around with the recipes below, Google made it very easy to create a new Istio-aware K8s cluster using Deployment Manager.

In the next section, we will look at different use cases that illustrate some of Istio’s awesomeness…

Should my prose bore you… the code and K8s/Istio manifests are in the hangman link at the end of this article ;)

Get A Line?

Let’s face it, CLIs are the new black! For this writing, I thought it would be cool to author a Hangman game CLI application. It had been a while since I had written a CLI, so I figured why not? To boot, I’ve always wanted to take GoKit, by the very talented Peter Bourgon, out for a spin. Not surprisingly, GoKit is a clever concept and very much in line with the philosophy at play here, namely decorating and cross-cutting!

For the Hangman game, I’ve divided the app into 3 separate services:

- Dictionary - to fetch new words

- Game - to manage the game state

- Hangman - The actual game and score tracker
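For a feel of what "business logic first" means for one of these services, here is a hypothetical sketch of the core of the Game service: pure game state, with no HTTP or gRPC in it. The `Game` type and its methods are illustrative assumptions, not the code from the hangman repo.

```go
package main

import (
	"fmt"
	"strings"
	"unicode"
)

// Game is a hypothetical model of the state the Game service manages.
type Game struct {
	word      string
	guessed   map[rune]bool
	misses    int
	maxMisses int
}

// NewGame starts a game for word, allowing maxMisses wrong letters.
func NewGame(word string, maxMisses int) *Game {
	return &Game{word: strings.ToLower(word), guessed: map[rune]bool{}, maxMisses: maxMisses}
}

// Guess records a letter and reports whether it occurs in the word.
// Repeating a letter never costs an extra miss.
func (g *Game) Guess(letter rune) bool {
	letter = unicode.ToLower(letter)
	hit := strings.ContainsRune(g.word, letter)
	if !g.guessed[letter] && !hit {
		g.misses++
	}
	g.guessed[letter] = true
	return hit
}

// Masked shows guessed letters and hides the rest behind underscores.
func (g *Game) Masked() string {
	var b strings.Builder
	for _, r := range g.word {
		if g.guessed[r] {
			b.WriteRune(r)
		} else {
			b.WriteRune('_')
		}
	}
	return b.String()
}

// Won reports whether every letter has been revealed.
func (g *Game) Won() bool { return !strings.ContainsRune(g.Masked(), '_') }

// Lost reports whether the player ran out of misses.
func (g *Game) Lost() bool { return g.misses >= g.maxMisses }

func main() {
	g := NewGame("Hangman", 6)
	for _, r := range "anz" {
		g.Guess(r)
	}
	fmt.Println(g.Masked(), g.Won(), g.Lost()) // _an__an false false
}
```

Serving this over HTTP or gRPC would then be a decoration around `Game`, not part of it.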

Below is what the game might look like in an Istio-enabled Kubernetes cluster. Note the Envoys at the edge and the decorated pods.

Canaries In The Cloud Mine…

Canary builds are a common practice to kick the tires prior to an official release. They allow a team to vet an implementation among friendlies before unleashing the hounds. Though one can deploy canary builds directly with K8s, additional legwork is required… Typically you will see folks standing up new environments or namespaces to perform this task. Wouldn’t it be cool if one could reuse existing deployments to enable this activity?

So let’s say we have a new iteration of our hangman game where we want to introduce custom dictionaries. Our engineering team has been hard at work and released a variation of the game for the holidays, codename Hangman Orange, where we introduce a new Trump-flavored dictionary with colorful guess words: Moron, Fakenews, Lightweight,…

Use Case #1: Do Mess With The Do!

In this case, we would like to gently ease the new version into our customer base. Merely deploying v2 of the dictionary next to v1 would spread requests across both versions, with no control over which customers see the new release. What we actually want is to control the flow so as to only expose, say, 5% of our customer population.

kubectl create -f istio/rules/dic-95-5.yml

```yaml
# dic-95-5.yml
apiVersion: config.istio.io/v1alpha2
kind: RouteRule
metadata:
  name: dic-80-v2
spec:
  destination:
    name: dictionary
  route:
  - labels:
      version: v1   # <- current version
    weight: 95      # <- 95% of the traffic
  - labels:
      version: v2   # <- Hangman Orange version
    weight: 5       # <- 5% of the traffic
```
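To see what the weights mean, here is a toy Go sketch of weighted version selection: each request draws a number in [0, 100) and lands on the version whose cumulative weight range contains it. It only illustrates the 95/5 split; it is not Envoy's actual load-balancing code, and `Route` and `pick` are hypothetical names.

```go
package main

import (
	"fmt"
	"math/rand"
)

// Route pairs a version label with a traffic weight, mirroring the
// labels.version / weight pairs in the route rule.
type Route struct {
	Version string
	Weight  int // percentage; expected to sum to 100
}

// pick maps a roll in [0, 100) onto the cumulative weights.
func pick(routes []Route, roll int) string {
	if len(routes) == 0 {
		return ""
	}
	cum := 0
	for _, r := range routes {
		cum += r.Weight
		if roll < cum {
			return r.Version
		}
	}
	// Weights summing to less than 100 leave a gap; send it to the last route.
	return routes[len(routes)-1].Version
}

func main() {
	routes := []Route{{"v1", 95}, {"v2", 5}}
	rnd := rand.New(rand.NewSource(1))
	counts := map[string]int{}
	for i := 0; i < 10000; i++ {
		counts[pick(routes, rnd.Intn(100))]++
	}
	fmt.Println(counts) // v2 should land near 5% of the requests
}
```

Ramping up is then just a matter of editing the weights, e.g. `{"v1", 50}, {"v2", 50}` and later `{"v2", 100}`, which is what re-applying the route rule does for you without any code.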

Having gathered user feedback on the new rev, we can now gently ease in the new code base by merely changing the weights in the route rule, and finally roll out our new rev to 100% of the traffic.

All this is on the fly and with no application code changes!

Use Case #2: Does Polly Want a Cookie?

Say you want finer control over your new deployment, where you only want to divulge the new rev to friendly customers. Envoy gives you the ability to achieve this by inspecting headers/cookies and directing traffic to your new rev when matches are found. In the case below, for traffic coming from the hangman service to the dictionary, only customers with a cookie set to **dic=trump** will get to see the new release.

kubectl create -f istio/rules/cookie.yml

```yaml
# cookie.yml
apiVersion: config.istio.io/v1alpha2
kind: RouteRule
metadata:
  name: dic-cookie-v2
spec:
  destination:
    name: dictionary
  match:
    source:
      name: hangman
    request:
      headers:
        cookie:
          regex: "^(.*?;)?(dic=trump)(;.*)?$"
  route:
  - labels:
      version: v2
    weight: 100
```
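Since the rule hinges on a regex, it is worth checking it against realistic `Cookie` headers before deploying. The sketch below runs the same pattern through Go's `regexp` package (RE2). Envoy's own regex engine may differ in edge cases, but for this simple anchored pattern the match results should be the same. One thing it surfaces: RFC 6265 has user agents separate cookies with `; ` (semicolon plus space), and the pattern as written does not match `dic=trump` when it follows such a separator.

```go
package main

import (
	"fmt"
	"regexp"
)

// Same pattern as the route rule's cookie match.
var dicTrump = regexp.MustCompile(`^(.*?;)?(dic=trump)(;.*)?$`)

func main() {
	for _, c := range []string{
		"dic=trump",             // true
		"session=42;dic=trump",  // true
		"dic=trump;session=42",  // true
		"session=42; dic=trump", // false: space after ';'
		"dic=trumpet",           // false: anchors reject partial values
	} {
		fmt.Printf("%q matches: %v\n", c, dicTrump.MatchString(c))
	}
}
```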

Use Case #3: Unleash The Sloths!

Network latencies are always a matter of when, not if. When calling third-party APIs or your own, service responses may sporadically slow to a grinding halt. How will the rest of your stack behave under these conditions? Does your stack gracefully degrade? Do you get alerts? Or do you get the dreaded phone call?

Envoy provides a mechanism to apply virtual brakes and lets you visualize how this ripples through your service stack. Say, for example, our primordial dictionary suddenly suffers from a sloth disease, where some of the requests now take several seconds. What happens to our dependent services and ultimately to the users of our game?

kubectl create -f istio/rules/delay.yml

```yaml
# delay.yml
apiVersion: config.istio.io/v1alpha2
kind: RouteRule
metadata:
  name: dic-delay
spec:
  destination:
    name: dictionary
  route:
  - labels:
      version: v1
  httpFault:
    delay:
      percent: 50       # <- Affect 1/2 the traffic
      fixedDelay: 10s   # <- Sloth set to 10s delays
```

If you’re leveraging Prometheus metrics in your stack, you know writing custom alerts for your system can be tricky. This gives you a great way to test that they will be triggered under the right conditions and that you don’t send out false positives or false negatives!

Use Case #4: Oh Just Let It Fail!

Let’s emulate failures on our new service. Here we set up a policy where 50% of the dictionary requests coming from our hangman service fail with a 400. This gives you a prime opportunity to see the ripple effect in your stack and see how your implementation does or doesn’t cope with the failures.

kubectl create -f istio/rules/partial_failure.yml

```yaml
# partial_failure.yml
apiVersion: config.istio.io/v1alpha2
kind: RouteRule
metadata:
  name: dic-fault
spec:
  destination:
    name: dictionary
  match:
    source:
      name: hangman
  httpFault:
    abort:
      percent: 50        # <- for 1/2 the traffic
      httpStatus: 400    # <- Respond with a 400 status code
```

Use Case #5: Once Again With Feeling!

Implementing retry logic in any code base can be tricky. Each language has its own libs and approaches. Shouldn’t this be implemented as a cross-cutting concern at the service boundaries rather than within each service? Also, can you dynamically configure timeout/retry behaviors?

You bet! Once again, Envoy to the rescue. Retry logic can be externalized and generalized, i.e., no more language-specific implementations and code bloat!

Here, if our dictionary calls fail or time out, we can apply retry policies across the mesh!

kubectl create -f istio/rules/retries.yml

```yaml
# retries.yml
apiVersion: config.istio.io/v1alpha2
kind: RouteRule
metadata:
  name: dic-retry
spec:
  destination:
    name: dictionary
  route:
  - labels:
      version: v1
    weight: 100
  httpReqRetries:
    simpleRetry:
      attempts: 3         # <- Retries the call 3 times
      perTryTimeout: 1s   # <- each attempt times out after 1s
```

Mind The Lolls!

As of this writing, Istio is in alpha. Here are a few things I’ve stumbled into while playing with version 0.4.

- Minikube. While playing around with Istio on my local cluster, I noticed that Pilot would at times become hard of hearing. Though installed policies still worked, I could no longer add, delete or list policies.

- Policy Validation. Beware: kubectl does not perform validation on the policies! If you have errors in your configuration rules, you will not get notified. So I recommend using the istioctl CLI directly to make sure your rules are cool!

- Envoy Lolls. I noticed that pushing policies destined for the cluster’s edge controller at times had no effect. Debugging such conditions can be tricky!

- HTTP 1.1/2.0/WebSocket traffic only! So beware of the tech stack you are deploying. For instance, I had issues deploying Postgres, where the probes failed because pg_isready could no longer reach the database. In these situations you can exclude a pod from being decorated, but you’ll lose the knobs!

- Extensibility? Envoy is written in C++. To be honest, C++ was my least favorite language ;( Though I understand the need for speed, I am a bit concerned about extensibility improvements in the open-source community at large…

Sheeting Out…

There is much more that can be discussed on this subject, but I hope I’ve whetted your appetite enough to start your own investigation. IMHO this framework is changing the game in how we deploy, observe and test workflows in our clusters. Istio brings a lot to the table when it comes to securing services and traffic combing. To boot, it comes bundled with custom dashboards and metrics that give you a leg up on observability into your clusters with best-of-breed techs like Zipkin and Prometheus.

In a world of microservices and commoditization of components, having the ability to further dial in, customize and test your application workflows with zero code changes and in a decorative manner is pure genius!

A special Atta-Boy! goes to the Envoy team for building and sharing their awesome tech, and to Lyft, Google and IBM for making it accessible to the Kubernetes community!

Happy Sails!